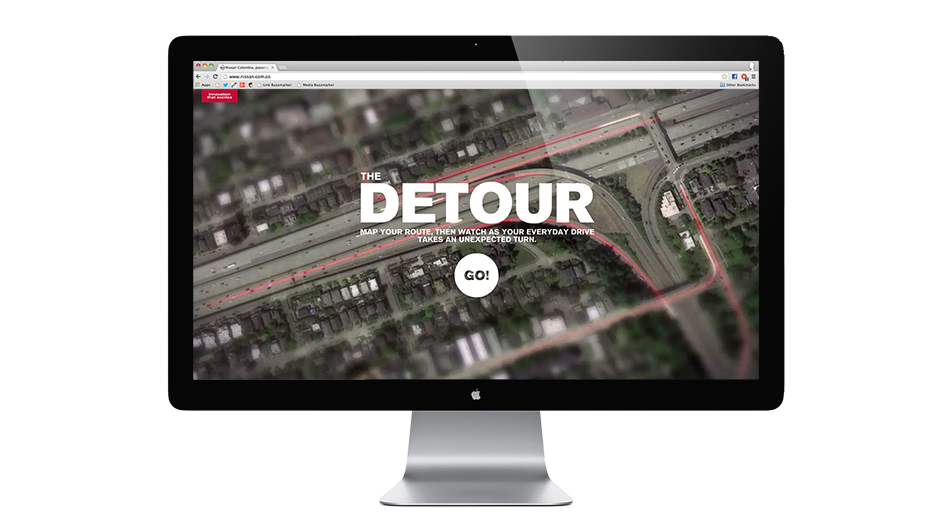

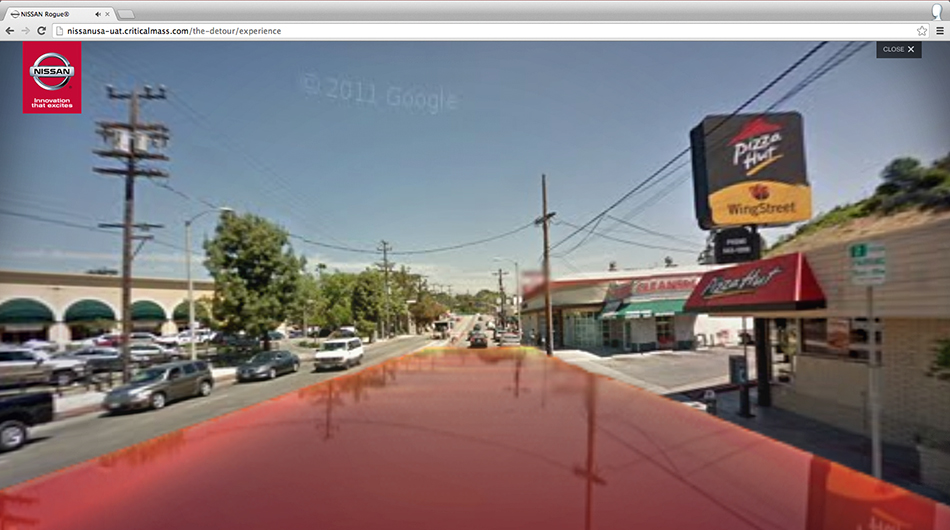

Nissan’s ‘The Detour’ is an interactive driving adventure built inside Google Maps and Street View, in support of the ongoing rollout of the all-new 2014 Nissan Rogue SUV.

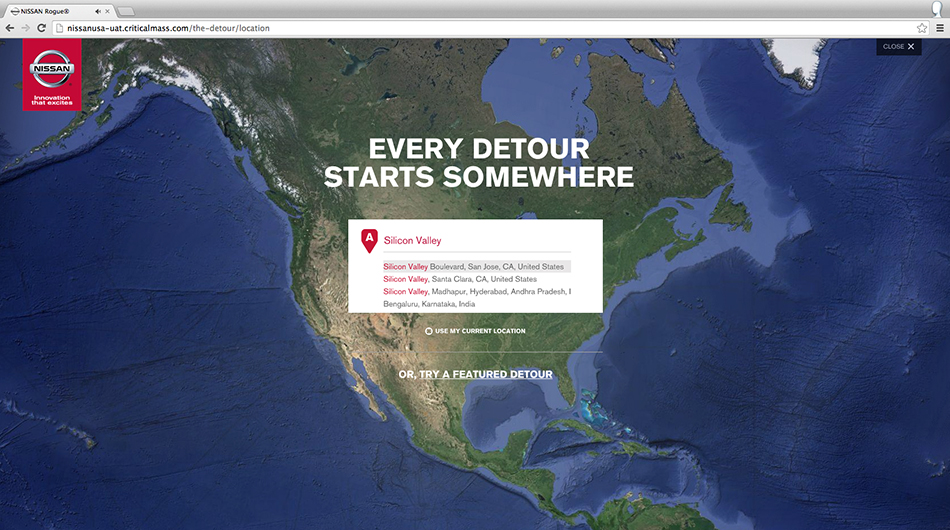

The Detour is a ground-breaking, browser-based digital campaign that creates a virtual test drive customised for every visitor. The location is up to you: it can be anywhere in the world.

The project brings together Critical Mass as lead agency, software from Google, the talents of Academy Award-winning director of photography Guillermo Navarro, CGI from Digital Domain, and a small in-house team of coders, designers and artists here at UNIT9, led by director Anrick Bregman.

Making the Nissan Rogue experience possible as an HTML5-powered website was a delicate process of problem-solving and innovation: the final experience would combine live action, CGI, interface design, music and sound, and data personalised to each user.

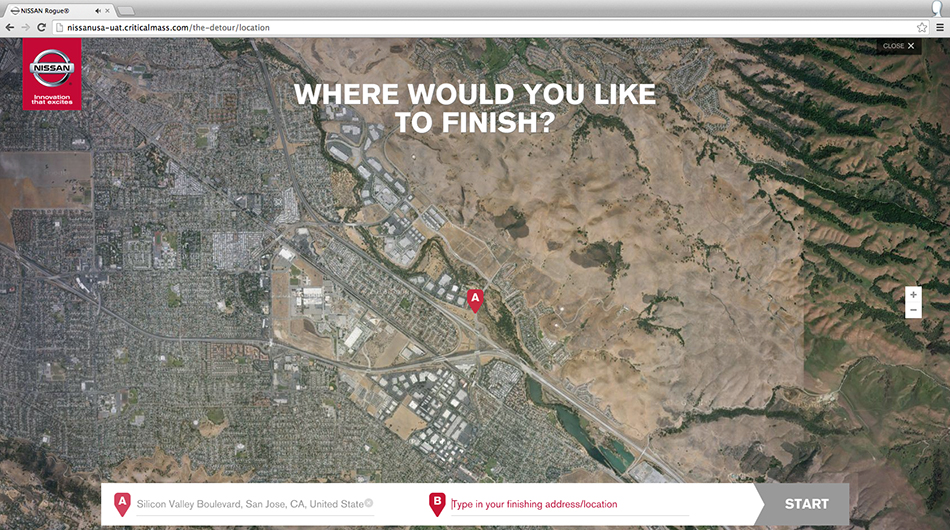

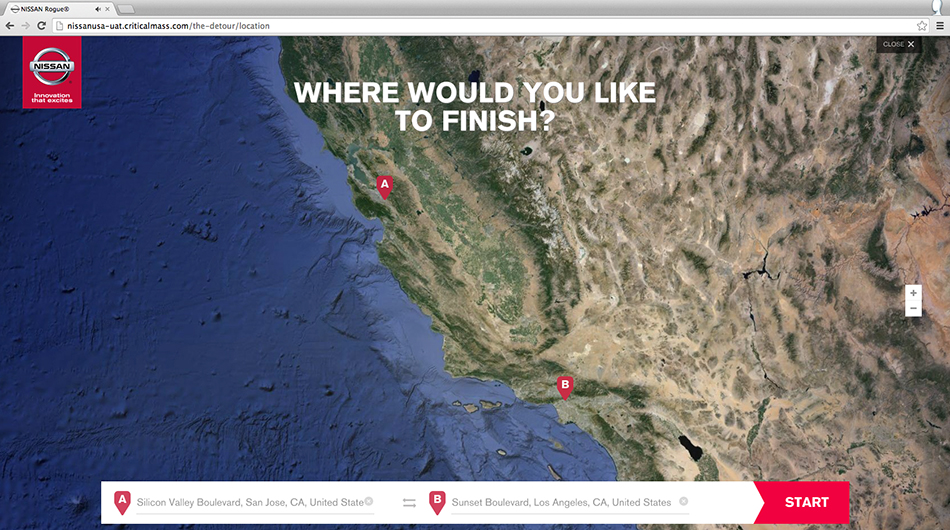

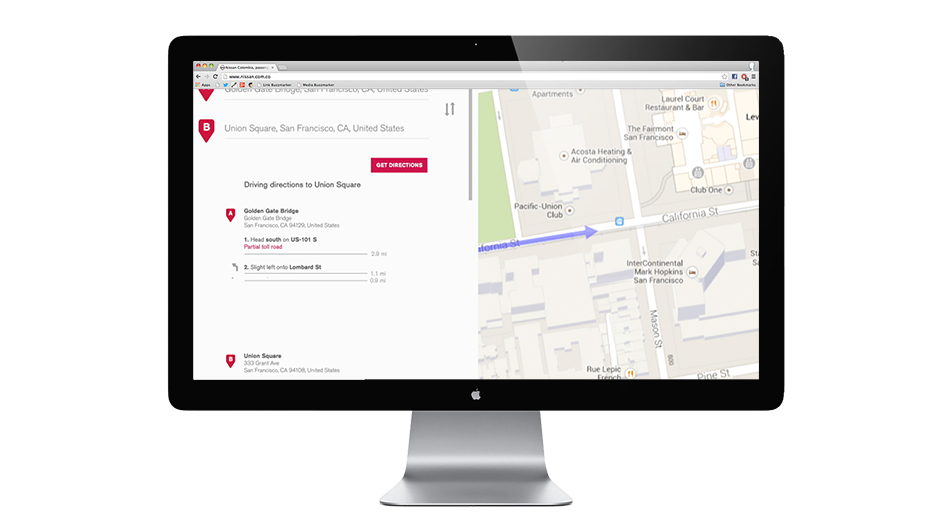

At the base of The Detour’s build are Google Maps and Street View assets, retrieved through the DirectionsService of the Google Maps API v3 and based on two locations anywhere in the world, as chosen by the user.

But the Google assets we received from the API were static panoramas: just a single, large image. Picture any location you have seen in Street View, such as your house or Fifth Avenue. We only had access to static 360° photos taken by Google’s Street View cars.

Our first challenge, therefore, was to create a sense of camera movement: to take those panoramas, build on them and make them cinematic. To do this we created a structure that allows camera movement to be mapped out and rendered on the fly, while the user is watching. Lens effects and depth of field would also help create the right look.
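A structure like this can be pictured as a list of camera keyframes (heading, pitch and field of view) that are interpolated every frame while the panorama is on screen. The TypeScript below is a hypothetical sketch, not the project’s code: the `CameraKey` shape, the smoothstep easing and the shortest-arc heading interpolation are our assumptions.

```typescript
// Hypothetical camera keyframe for a static 360° panorama.
interface CameraKey {
  t: number;       // time in seconds
  heading: number; // degrees, 0 to 360
  pitch: number;   // degrees
  fov: number;     // field of view in degrees (narrower = zoomed in)
}

// Smoothstep easing so moves start and stop gently, like a dolly or crane.
function ease(x: number): number {
  return x * x * (3 - 2 * x);
}

// Interpolate heading along the shortest arc, so 350° -> 10° turns 20°, not 340°.
function lerpHeading(a: number, b: number, k: number): number {
  const delta = ((b - a + 540) % 360) - 180;
  return (a + delta * k + 360) % 360;
}

// Camera state at time t; keys must be sorted by t. Clamps outside the range.
function cameraAt(keys: CameraKey[], t: number): CameraKey {
  if (t <= keys[0].t) return keys[0];
  const last = keys[keys.length - 1];
  if (t >= last.t) return last;
  let i = 0;
  while (keys[i + 1].t < t) i++;
  const a = keys[i];
  const b = keys[i + 1];
  const k = ease((t - a.t) / (b.t - a.t));
  return {
    t,
    heading: lerpHeading(a.heading, b.heading, k),
    pitch: a.pitch + (b.pitch - a.pitch) * k,
    fov: a.fov + (b.fov - a.fov) * k,
  };
}
```

The shortest-arc step matters because headings wrap around: easing from 350° to 10° should sweep 20°, not spin back through 340°.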

In addition to a panoramic image, though, the response returned by the Google Maps API also contains comprehensive information about the roads between points A and B.

We used this information to let our code ‘cherry-pick’ sections of your journey. In other words, we divided the user’s chosen test drive into steps that could be combined to create the feeling of an edited sequence, just like a commercial.

Our system first uses the Street View Service to check whether a Street View panorama is available at the start and destination addresses.

We then map out the full route, matching the journey’s turns, straight roads and intersections with our set of car shots.
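A minimal sketch of that matching, assuming each step is reduced to its manoeuvre string (the Directions API’s `DirectionsStep.maneuver`, with values such as `turn-left` or `turn-slight-right`, and sometimes empty). The shot names, the three shot categories and the round-robin choice are hypothetical, chosen only to illustrate the idea.

```typescript
// Simplified route step: the real DirectionsStep carries many more fields.
interface RouteStep {
  maneuver: string; // e.g. "turn-left", "turn-slight-right", "straight", or "" when absent
}

type ShotKind = "left" | "right" | "straight";

// Hypothetical shot library: several car renders per kind of road moment.
const SHOTS: Record<ShotKind, string[]> = {
  left: ["left_low_angle", "left_wide"],
  right: ["right_tracking", "right_wide"],
  straight: ["straight_front", "straight_side", "straight_aerial"],
};

// Collapse Google's many manoeuvre values into the few kinds we have shots for.
function classify(step: RouteStep): ShotKind {
  if (step.maneuver.endsWith("left")) return "left";
  if (step.maneuver.endsWith("right")) return "right";
  return "straight";
}

// Build an "edit": one shot per step, cycling through each kind's variants
// so consecutive similar steps don't repeat the same footage.
function buildEdit(steps: RouteStep[]): string[] {
  const used: Record<ShotKind, number> = { left: 0, right: 0, straight: 0 };
  return steps.map((step) => {
    const kind = classify(step);
    const options = SHOTS[kind];
    const shot = options[used[kind] % options.length];
    used[kind]++;
    return shot;
  });
}
```

Cycling through the variants for each kind keeps two consecutive straight stretches from reusing the same footage, which helps the result feel edited rather than looped.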

We filter out steps that the system can’t use or that would produce an unwanted result in the user’s chosen journey.

For example, we removed steps inside tunnels to keep the shots at street level and consistent.

Unfortunately, Google does not always describe steps in the same way, so we used a two-level filter: first on each step’s manoeuvre property, then by searching the step’s instruction text for given substrings or words.
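A minimal sketch of the two-level filter, assuming steps shaped like the Directions API’s `DirectionsStep`, which has a `maneuver` string (sometimes empty) and an HTML `instructions` string. The blocked manoeuvres and keywords below are illustrative assumptions; only tunnels are named in the text above.

```typescript
interface RouteStep {
  maneuver: string;     // may be "" when Google gives no manoeuvre
  instructions: string; // HTML text such as "Continue through the <b>Broadway Tunnel</b>"
}

// Level 1: manoeuvre values we never want to shoot (illustrative list).
const BLOCKED_MANEUVERS = new Set(["ferry", "ferry-train"]);

// Level 2: words that reveal an unusable step even when the manoeuvre is blank
// (illustrative list).
const BLOCKED_WORDS = ["tunnel", "underpass"];

function isUsable(step: RouteStep): boolean {
  if (BLOCKED_MANEUVERS.has(step.maneuver)) return false;
  // Strip HTML tags and compare case-insensitively.
  const text = step.instructions.replace(/<[^>]*>/g, " ").toLowerCase();
  return !BLOCKED_WORDS.some((word) => text.includes(word));
}

function filterSteps(steps: RouteStep[]): RouteStep[] {
  return steps.filter(isUsable);
}
```

The second level catches the cases the first misses: a tunnel usually arrives as a plain “continue” step with no special manoeuvre, so only its instruction text gives it away.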

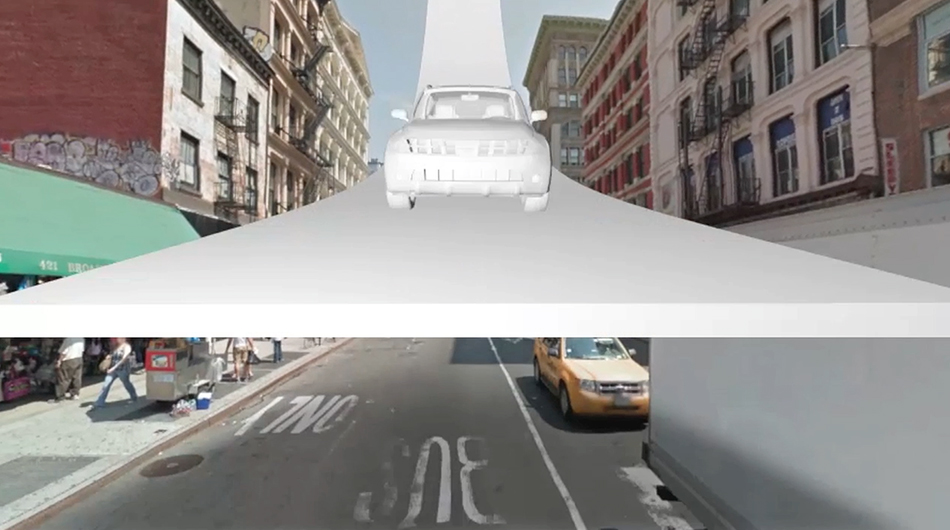

Once the journey was mapped out within Google Maps, we had to add the car on top. Right from the start of the project, long before we started coding, we worked very closely with Digital Domain. The Nissan Rogue would have to be both shot on location in San Francisco and fully recreated in CGI.

It took a lot of careful planning. The car footage would be superimposed on our Google Street View and Maps backdrops on a shot-by-shot basis.

With so many different streets out there, it was a very tough task to create a series of car animations that would work literally everywhere.

We also had to match the camera angle of any given Street View asset to the render of the car.

But much more important, and more complicated, was tracking the camera’s movements convincingly: for both layers, the car and the backdrop, any camera movement had to match perfectly.

We knew that if the camera’s position or movement differed even marginally between the two layers, the spell would be broken.
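A simple way to guarantee that match is to drive both layers from one camera sample per frame instead of animating each layer separately. This is a hypothetical sketch: the `CameraSample` track format and the CSS-style transform string are our assumptions, standing in for whatever per-frame camera data the tracking produced.

```typescript
// One tracked camera sample per video frame (hypothetical format).
interface CameraSample {
  x: number;     // horizontal pan in pixels
  y: number;     // vertical pan in pixels
  scale: number; // zoom factor
}

interface Layer {
  name: string;
  transform: string;
}

// Every layer receives a transform computed from the *same* sample, so the
// backdrop and the car can never drift apart.
function applyCamera(track: CameraSample[], frame: number, layers: Layer[]): void {
  const i = Math.min(Math.max(Math.round(frame), 0), track.length - 1);
  const { x, y, scale } = track[i];
  const transform = `translate(${-x}px, ${-y}px) scale(${scale})`;
  for (const layer of layers) {
    layer.transform = transform;
  }
}
```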

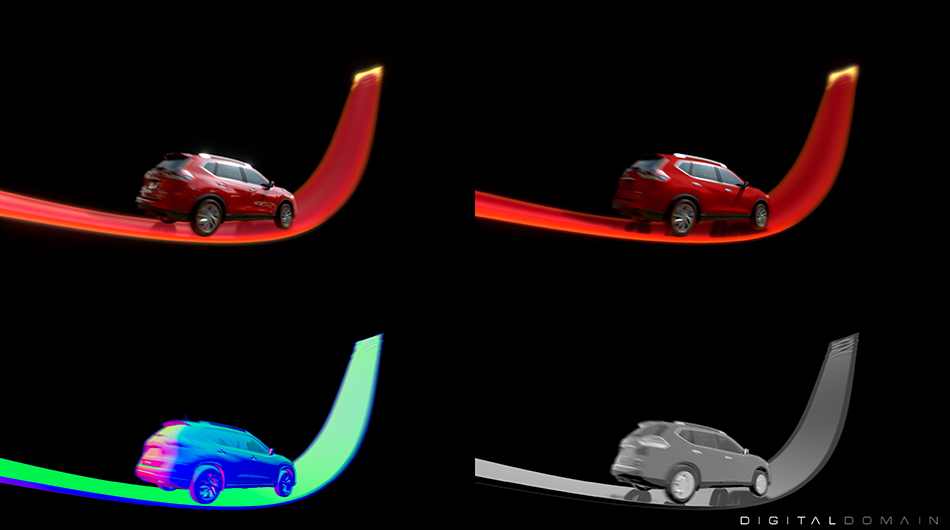

Once both layers were in place, the team faced its next challenge.

How do we make these two layers look the same? How do we bring them into the same colour and light space?

The Street View and Maps images vary hugely in colour and saturation depending on your location: Arizona is bright and yellow, while New York is more muted and bluish.

We had to create consistency between these different locations, so that our car footage on top would always look right, by applying a consistent set of specific colour values.

But in addition to ‘standardising’ the assets, we also wanted to add a magic touch: some post-production effects.

All assets would have to be carefully arranged to appear as one single visual with the quality of a high-end commercial.

We started this process by applying traditional post-production techniques across a wide range of Street View images, to find the right universal grade.

Working within the tight limits of HTML5’s capabilities, we used very simple techniques: colour and saturation adjustments, plus a number of very subtle coloured overlays, were applied to our sample of Street View locations.

We then translated this universal grade into a code-only version, a server-side adjustment applied in real time, to ‘glue’ each of the layers together.
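As an illustration of what a code-only grade can look like, here is a per-pixel sketch of the operations named above: a saturation change followed by a subtle colour overlay blended at low opacity. It works on a flat RGBA buffer (the layout of canvas `ImageData.data`); the `Grade` parameters and any values you pass in are hypothetical, not the grade used on the project.

```typescript
interface Grade {
  saturation: number;                // 1 = unchanged, < 1 = more muted
  overlay: [number, number, number]; // RGB tint, 0 to 255
  overlayOpacity: number;            // 0 to 1, kept very low for subtlety
}

// Apply the grade in place to RGBA pixel data.
// Uint8ClampedArray rounds and clamps each value on assignment.
function applyGrade(px: Uint8ClampedArray, g: Grade): void {
  const a = g.overlayOpacity;
  for (let i = 0; i < px.length; i += 4) {
    let red = px[i];
    let green = px[i + 1];
    let blue = px[i + 2];
    // Saturation: move each channel toward (or away from) the pixel's luma.
    const luma = 0.299 * red + 0.587 * green + 0.114 * blue;
    red = luma + (red - luma) * g.saturation;
    green = luma + (green - luma) * g.saturation;
    blue = luma + (blue - luma) * g.saturation;
    // Subtle coloured overlay: a plain alpha blend toward the tint.
    px[i] = red * (1 - a) + g.overlay[0] * a;
    px[i + 1] = green * (1 - a) + g.overlay[1] * a;
    px[i + 2] = blue * (1 - a) + g.overlay[2] * a;
    // px[i + 3] (alpha) is left untouched.
  }
}
```

Applying one fixed grade to every location pulls a yellow Arizona frame and a blue New York frame toward the same look, which is what lets a single car render sit convincingly on both.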

In addition to colours, we also looked at other coded effects we could use to make the layering as invisible as possible.

We started by adding a subtle camera shake whenever the car passes very close to the camera. The shake is applied in code to both the background and foreground layers, making the illusion just a little more convincing, as if the car is really there.
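A hypothetical sketch of proximity-driven shake, assuming the car’s distance to the camera is known for each frame (for example from the render data). The amplitude rises as the car approaches, and the returned offset is meant to be added to both layers alike. The 3 m threshold, the 6 px maximum and the sine-based wobble are invented values, not the project’s.

```typescript
// Returns a pixel offset to add to BOTH the backdrop and the car layer.
function shakeOffset(
  distanceMeters: number,
  timeSeconds: number,
  maxAmplitudePx = 6,
  thresholdMeters = 3,
): { dx: number; dy: number } {
  if (distanceMeters >= thresholdMeters) return { dx: 0, dy: 0 };
  // 0 at the threshold, 1 when the car is right at the lens.
  const strength = 1 - Math.max(distanceMeters, 0) / thresholdMeters;
  const amp = maxAmplitudePx * strength * strength; // ease in so it stays subtle
  // A few mixed sine frequencies give a cheap, irregular-looking wobble.
  const dx = amp * Math.sin(timeSeconds * 37.0) * Math.cos(timeSeconds * 13.0);
  const dy = amp * Math.sin(timeSeconds * 29.0 + 1.3);
  return { dx, dy };
}
```

Because a single function produces the offset, both layers always shake by exactly the same amount, which keeps the car locked to the road.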

We also built real-time reflections into the tracked Street View scenes, since both the Rogue’s bodywork and our red road surface are reflective.

Doubling up each Street View asset, flipping it and using it as a dynamic source for natural reflections as the Rogue drives your route required heavy mathematical operations, but it was an essential illusion: it gives the appearance that your Detour test drive happens in the real world, with high-end attention to detail.
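The core of that trick can be sketched as a vertical flip of the backdrop’s pixels with an opacity fade, so it reads as a reflection rather than a mirror. This hypothetical sketch works on an RGBA buffer like canvas `ImageData.data`; the linear fade and the `strength` parameter are assumptions, and a production version would also distort the result to follow the car’s bodywork.

```typescript
// Produce a reflection source: the image flipped vertically, with alpha fading
// from `strength` at the top row (nearest the reflecting surface) to 0 at the bottom.
function makeReflection(
  src: Uint8ClampedArray,
  width: number,
  height: number,
  strength = 0.4,
): Uint8ClampedArray {
  const out = new Uint8ClampedArray(src.length);
  for (let y = 0; y < height; y++) {
    const srcRow = height - 1 - y; // flip: last source row becomes first output row
    const fade = height > 1 ? strength * (1 - y / (height - 1)) : strength;
    for (let x = 0; x < width; x++) {
      const s = (srcRow * width + x) * 4;
      const d = (y * width + x) * 4;
      out[d] = src[s];
      out[d + 1] = src[s + 1];
      out[d + 2] = src[s + 2];
      out[d + 3] = src[s + 3] * fade;
    }
  }
  return out;
}
```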

All of this had another complicating dimension: how could we provide acceptably smooth playback for all users? Applying all of these effects to two dynamic layers of full-screen HD video is no easy task.

Working in HTML5 still means very limited access to the true power of modern browsers. Besides, making The Detour work for as many people as possible, regardless of their computer setup, was a big priority for us.

After a process of research and prototyping, the first issue we had to tackle was displaying videos with a transparency channel on top of the Google Street View panoramas.

It is hard to believe, but true, that video transparency is not supported across all of the latest browsers.

Our approach was to draw an image sequence within the HTML5 canvas. But this created an enormous network traffic overhead, which would never have been acceptable to our users or our client, and with good reason.

Further optimization was required, so we replaced the large PNG images used for all the Nissan Rogue renders with a small collection of sprite sheets: large images that combine multiple frames of a video sequence by placing them next to one another in a grid.

As a result, asset loading time decreased dramatically.
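Drawing from a sprite sheet means working out where frame n sits in the grid. A minimal sketch, assuming frames are packed left to right, top to bottom, at a fixed size per sheet; the `SheetMeta` fields are hypothetical. In a browser, the resulting rectangle would be passed to the nine-argument form of `CanvasRenderingContext2D.drawImage` (source x, y, width and height, then the destination rectangle).

```typescript
// Per-sheet metadata (hypothetical shape).
interface SheetMeta {
  frameWidth: number;
  frameHeight: number;
  columns: number;    // frames per row
  frameCount: number; // frames actually present on this sheet
}

interface Rect {
  sx: number;
  sy: number;
  sw: number;
  sh: number;
}

// Source rectangle of a frame inside the sheet.
function frameRect(meta: SheetMeta, frame: number): Rect {
  if (!Number.isInteger(frame) || frame < 0 || frame >= meta.frameCount) {
    throw new RangeError(`frame ${frame} is not on this sheet`);
  }
  const col = frame % meta.columns;
  const row = Math.floor(frame / meta.columns);
  return {
    sx: col * meta.frameWidth,
    sy: row * meta.frameHeight,
    sw: meta.frameWidth,
    sh: meta.frameHeight,
  };
}

// Map a frame number in a long sequence to (sheet index, frame on that sheet),
// assuming every sheet except possibly the last holds `framesPerSheet` frames.
function locateFrame(globalFrame: number, framesPerSheet: number): { sheet: number; frame: number } {
  return {
    sheet: Math.floor(globalFrame / framesPerSheet),
    frame: globalFrame % framesPerSheet,
  };
}
```

One sheet replaces dozens of separate image requests, which is where the drop in HTTP requests comes from.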

For those interested in the details, here’s an overview of our technology solutions across the project.

– Decreased number of image loading HTTP requests by 97% (from 479 to 12)

– Reduced the size of the assets by 99%

– Image scaling – 50% for primary images and 25% for alpha images

– Compression per sprite sheet using TinyPNG: 80% compression

– Metadata is included per sprite sheet instead of per image.
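The image-scaling figures suggest that colour and alpha were stored as separate images, with the alpha mask at half the linear resolution of the colour frames (25% versus 50% scale). Under that reading, which is our assumption rather than a documented detail, each frame has to be recombined before it is drawn. Below is a minimal sketch that rebuilds an RGBA frame from a colour buffer and a smaller greyscale mask using nearest-neighbour sampling; both buffers use the RGBA layout of canvas `ImageData.data`. For scale, the first bullet’s drop from 479 requests to 12 works out to 1 − 12/479 ≈ 97.5%.

```typescript
// Recombine a colour frame and a lower-resolution greyscale alpha mask into RGBA.
// The mask's red channel is taken as opacity.
function mergeAlpha(
  color: Uint8ClampedArray, cw: number, ch: number,
  mask: Uint8ClampedArray, mw: number, mh: number,
): Uint8ClampedArray {
  const out = new Uint8ClampedArray(cw * ch * 4);
  for (let y = 0; y < ch; y++) {
    // Nearest-neighbour row lookup into the smaller mask.
    const my = Math.min(mh - 1, Math.floor((y * mh) / ch));
    for (let x = 0; x < cw; x++) {
      const mx = Math.min(mw - 1, Math.floor((x * mw) / cw));
      const c = (y * cw + x) * 4;
      const m = (my * mw + mx) * 4;
      out[c] = color[c];
      out[c + 1] = color[c + 1];
      out[c + 2] = color[c + 2];
      out[c + 3] = mask[m];
    }
  }
  return out;
}
```

Storing alpha at a lower resolution works because the mask mostly describes the car’s silhouette, where a little softness is far less visible than in the colour detail.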

Working with the music of M.I.A. was an awesome bonus for us. We love her style, but on a more practical level her track for The Detour was also a really great composition to work with. Our main challenge on the sound side of the project was twofold.

Firstly, creating a perfectly seamless transition into the slow-motion interactive section of the project was a tricky challenge. It required us to re-edit the music track so that the viewer goes smoothly into, and out of, an infinite audio loop while they play with the Nissan Rogue in slow motion.

We used several layers of sound and music here to create an inaudible cut, and reworked various parts of the original track into a bespoke edit that would sound just right.

In the interactive section of The Detour you can scrub the video forwards and backwards. For us, what would really make this look and feel right was the sound treatment for that ‘scrubbing’. Sound is such an important part of our world, and letting people play with it, slowing it down, reversing it or speeding it up, is very rewarding.

So we built our interactive music and sound in several layers, with multiple versions of the audio playing simultaneously, so that at all times during the scrubbing section there is sound playing that responds to what you do.
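One way to have several simultaneous versions of the audio respond to scrubbing is to keep them all playing and only change their volumes. The sketch below maps scrub velocity to per-layer gains with an equal-power crossfade. The three layers (`still`, `forward`, `reverse`), the velocity range and the mapping are assumptions for illustration; in a browser, each value could be fed to the `gain` parameter of a Web Audio `GainNode`, ideally smoothed with `AudioParam.setTargetAtTime` to avoid clicks.

```typescript
interface LayerGains {
  still: number;   // ambient bed heard while the user holds the scrubber still
  forward: number; // version heard when scrubbing forwards
  reverse: number; // reversed version for scrubbing backwards
}

// velocity: scrub speed in video-seconds per real second; negative = backwards.
function scrubGains(velocity: number, maxVelocity = 2): LayerGains {
  const s = Math.max(-1, Math.min(1, velocity / maxVelocity));
  const motion = Math.abs(s);
  // Equal-power crossfade: still^2 + moving^2 = 1, so loudness stays roughly constant.
  const stillGain = Math.cos((motion * Math.PI) / 2);
  const movingGain = Math.sin((motion * Math.PI) / 2);
  return {
    still: stillGain,
    forward: s > 0 ? movingGain : 0,
    reverse: s < 0 ? movingGain : 0,
  };
}
```

Because every layer keeps playing and only its volume changes, there is always sound underneath the user’s gesture, and changing direction is just a swap between the forward and reverse gains.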

Secondly, working with sync sound in an interactive context is always very complicated from a technology perspective.

The Detour looks and feels like a commercial, but with one key difference: you, the user, choose where that commercial takes place. Making that seem simple for everyone on the internet took a lot of collaboration between many talented people, working across the globe on different parts of the whole.

From the driveway to the interstate highways, there are an infinite number of journeys to experience from the driver’s seat of the Rogue.

At the end of each drive, users can find their local dealership and schedule a test drive, download the M.I.A. soundtrack on NissanUSA.com, or invite people to try it out by sharing the site.


Release Date: 2014-02-06