Destination BC: The Great Wilderness Car Wash

The Royal Mint: Forever Metal (Luke & Joseph)

LEGO x Pharrell Williams: Pinball Experience

Harbin Beer: NBA FOOH Drone Show (Alexander Brown)

Fanta: Beetlejuice Afterlife Train (Hannah Neil)

Sky: Up (Luke & Joseph)

Uber: On Our Way (Hal Kirkland)

SYKY on Apple Vision Pro (Zofia Mackiewicz)

Dove x Pinterest: Real Beauty DNA

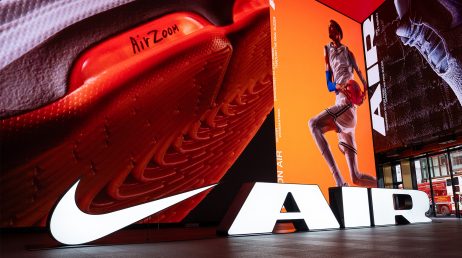

Nike: Win On Air at Outernet London (Sean Pruen)

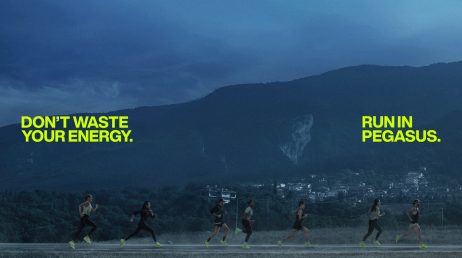

Nike Pegasus Relay

Coca-Cola: The Fresco

Sky News (Luke & Joseph)

Stök Cold Brew: The Morning After Promotion at Wrexham (Simon Neal)

MTV: Acknowledge, Support, Keep-in-Touch (A.S.K.)

The Pacha Group: Pacha Ibiza (Sean Pruen)

Hyundai: Experiment N

Nike Football: Phantom GX II Haaland ‘Force9’ (A Common Future)

FIVE Hospitality: FIXE LUXE, SENSORIA (Sean Pruen)

Make the Change for Kidney Health (Luke & Joseph)

Alcon: Systane (Hal Kirkland)

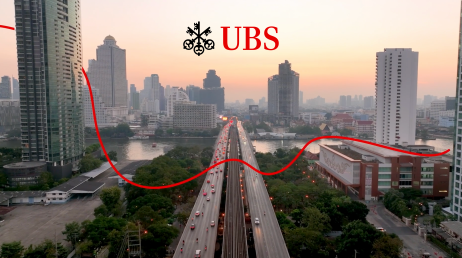

UBS Asset Management: The Red Thread Global Themes and Outlook

smart: 1# Platinum Edition

Aston Martin F1: AMR24 Launch Film (Sean Pruen)

Meta x Puma: World’s Smallest Gym (Hannah Neil)

Vizio: Watch Us

'If' Movie Premiere with Ryan Reynolds

Netflix: Sonic Shatterverse Experience

Wallbox: VFX Stunt (Zofia Mackiewicz, Alexander Brown)

Mountain Dew: Raid (Zlaten del Castillo)

Nerds: Super Bowl Teaser

Fanta: Parade of Play (Zofia Mackiewicz)

Riyadh Season Treasure (Jakub Jakubowski)

Mirinda: AI Flavour Generator

Sky Broadband: Play Like A Pro

Meta: Rugby World Cup Experience 2023 (Kate Lynham, Hannah Neil)
